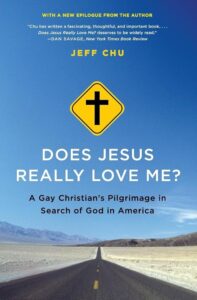

Does Jesus Really Love Me?

by Jeff Chu | Library: Newbooks | ~470 pages

INTRODUCTION

Introduction: Does Jesus Love Me?

Chu introduces the central question through the childhood hymn “Jesus Loves Me,” which he sang with his Chinese Baptist grandparents in Berkeley. He recounts a pivotal moment at his Christian high school when a beloved teacher, Mr. Byers, was publicly outed in a chapel assembly—an event that taught Chu his own homosexual feelings must remain hidden. Now thirty-five, gay, and a journalist, Chu describes his Baptist upbringing, his mother’s anguish, and his inability to stop believing in God despite deep doubt. He frames the book as a pilgrimage across America, interviewing over three hundred people to understand why Christians, starting from the same faith, reach radically different conclusions about homosexuality.

PART I: DOUBTING

Chapter I: Beginnings — In the Capital of Christian America (Nashville, Tennessee)

Chu lands in Nashville amid a cicada invasion, using it as a metaphor for the city’s battleground status over homosexuality. He profiles Lisa Howe, the Belmont University soccer coach forced out after coming out, whose story became a flashpoint for Tennessee’s “Don’t Say Gay” legislation. He interviews Southern Baptist leader Richard Land, who calls homosexuality “a pretty sad lifestyle,” and pastor David Shelley, who sees it as “the biggest threat to our civilization.” Chu contrasts these hardline voices with megachurch pastor Pete Wilson, who welcomed a lesbian couple’s child for dedication despite traditional beliefs. Howe’s unexpected turn toward faith after her dismissal—finding a welcoming church home—reverses the typical narrative of gay people leaving Christianity.

Chapter II: The Agnostics (New York; Bangor, Maine)

Chu explores loss of faith among gay Christians through three profiles. John Hauenstein suppressed his sexuality through ex-gay ministry for years; when he finally accepted it, his entire church community cut him off. He no longer identifies as Christian but keeps his Bibles on the shelf as a symbol of unfinished spiritual business. Andrae Gonzalo, a Project Runway alum raised Pentecostal, watched his faith erode through intellectual questioning; he now identifies as a nontheist, comparing his experience to being a minor courtier at Versailles. Michael Dean Gray lives a double life between his Pentecostal parents’ home and the gay community. At church he stands silent during “In Christ Alone,” a silence that underscores the gulf between the hymn’s promise and the lived reality of gay believers.

Oral History: Josh Cook

Josh Cook, raised in a mixed Catholic-evangelical household, traces his journey from fervent believer to secular humanist. His coming-out at fourteen led his father’s church to pressure him toward conversion therapy, which his father ultimately rejected. A subsequent experience in a personality-cult church eroded his trust in religious institutions. Though moved by a powerful Eucharistic experience, Cook’s intellectual rigor gradually dismantled his supernatural beliefs. He now values religious culture’s ethical impulses and admits he misses praying, expressing gratitude that being gay sensitized him to injustice.

Chapter III: Yes, Jesus Hates You — Westboro Baptist Church (Topeka, Kansas)

Chu travels to Topeka to confront the notorious Westboro Baptist Church, whose “God Hates Fags” messaging has made it America’s most reviled congregation. He shadows members on their Sunday picketing route and observes young children carrying signs reading “FAGS DOOM NATIONS.” Members justify their protests as acts of neighborly love, citing Leviticus 19:17. Chu describes the church’s domestic sanctuary—mauve carpet and wood-veneer paneling juxtaposed against posters declaring “GOD HATES FAGS”—and notes the theological overlap between Westboro’s Calvinist underpinnings and mainstream conservative Christianity. He meets the aged, ailing Fred Phelps, whose office displays both his Eagle Scout badges and an NAACP plaque alongside anti-gay posters. The chapter is marked by Chu’s personal fear—not of physical harm, but of discovering that Westboro’s theology might be internally consistent.

Chapter IV: The Power and the Story — The Scandal of the Harding University Queer Press (Searcy, Arkansas)

Chu profiles Sarah Everett, a lesbian student at Harding University, a conservative Church of Christ institution with strict behavioral codes. After coming out, Sarah and friends create HU Queer Press, a zine documenting gay experiences at Harding. The administration blocks the website, inadvertently amplifying its reach through national media coverage. Chu visits Harding and observes its enforced piety—mandatory chapel, rigid gender norms, and a special ministry called Integrity for students with “unwanted same-sex attractions.” Two evening chapels on homosexuality yield surprises: a psychology professor questions whether being gay is truly a choice, and a Bible professor reads a gay student’s email about the pain of being closeted. Yet the university president makes clear that affirming gay students are not welcome.

PART II: STRUGGLING

Chapter V: Exit Strategy, Part I — Exodus International’s Reorientation Ministry

Chu examines Exodus International, the world’s largest ex-gay ministry network. President Alan Chambers claims Exodus no longer preaches conversion to heterosexuality, insisting “the opposite of homosexuality is not heterosexuality. It’s holiness.” Yet Chu finds that the website still links to reparative therapy advocates. Chambers traces his homosexuality to childhood “gender insecurity” and abuse, and he frames his past gay life as promiscuous bar-hopping, generalizing from a narrow sample and subtly equating gay identity with a superficial lifestyle. Cofounder Michael Bussee, who fell in love with another male Exodus volunteer and left in 1979, insists Exodus members are not hateful but are “based in homophobia.” At a 2011 Gay Christian Network panel, Chambers stunned attendees by admitting 99.9% of Exodus participants had not experienced orientation change. Chu reflects on Henri Nouwen’s insight that fixation on human affection distracts from the “life of the heart.”

Chapter VI: Exit Strategy, Part II — A Visit to an Exodus Group (Kirkland, Washington)

Chu visits Tower of Light, an Exodus-affiliated ministry run by Jeff Simunds, a retired Microsoft employee. Jeff enforces strict group rules: no cross-talk, no contact exchange, clinical language only. He attributes his own homosexual desires to a “father wound”—hatred of his distant dad—and credits recovery to forgiving his father through prayer. Chu interviews “Tommy,” a bisexual man whose anonymous sexual encounters led to an HIV diagnosis he believes God miraculously healed, and “Pam,” who left a five-year lesbian relationship after a colleague’s prayer. Supervisor Lupe Maple offers a nuanced counterpoint, acknowledging that “Christian maturity is partly about living in the tension of not knowing.” Bryan, a young man of Chinese descent, remains attracted only to men and mourns a lost connection with another Christian who chose to affirm his sexuality—a poignant mirror of Chu’s own experience.

Bryan’s Story — Grace

Bryan reveals his deep internal conflict: he desires to please God yet honestly yearns for happiness and sexual expression. He identifies as “undeclared”—neither gay nor straight—illustrating the tortured theological somersaults many conservative gay Christians perform. Lupe’s anecdote about a prostitute crying out to God frames the key insight: God meets people wherever they are, before they stop sinning. Chu identifies the word Bryan seeks as “grace” and critiques Exodus leaders for reducing the “gay lifestyle” to a caricature and claiming to determine who qualifies for salvation.

Oral History: John Smid

John Smid, former executive director of Love In Action, recounts his journey from ex-gay leadership to a transformation of heart. After the 2005 Zach Stark controversy exposed the ministry, Smid left in 2008, gradually befriending a protester who had demonstrated against his organization. Attending a gay Christian conference stunned him with its joy and faithfulness. He eventually concluded that Scripture is not clear against loving same-sex relationships, only against immoral, idolatrous ones. He now makes amends for the shame-producing wounds he inflicted, acknowledging his same-sex attraction never diminished despite twenty-two years of marriage.

Chapter VII: Freedom to Marry — Jake and Elizabeth Buechner

Jake Buechner, a gay man pursuing Christian ministry, and Elizabeth, a straight woman, built an improbable mixed-orientation marriage rooted in shared faith, honest communication, and Erich Fromm’s vision of love as labor. Jake’s journey included depression, an Exodus group, and fear of lifelong loneliness before friends suggested marriage. Elizabeth chose the relationship with full knowledge of his attractions. Their courtship involved painful vulnerability: discussions of pornography, attractions to other men, and the admission that giddy romantic love was never their starting point. Both report a satisfying, if unconventional, sex life. Jake views his sexuality as both a gift from God and a “thorn in the flesh.” Chu, a gay man, finds their story confounding yet shaped by Kierkegaardian faith in the absurd.

Chapter VIII: Choosing Celibacy — Kevin Olson (St. Paul, Minnesota)

Kevin Olson, a fifty-seven-year-old Christian, has chosen lifelong celibacy as a homosexually oriented man. His story reveals the profound isolation and daily sacrifices of that choice. Raised in a secular home, Kevin found faith at JCPenney through a coworker’s public prayer. He describes himself as “homosexual-oriented” rather than “gay,” rejecting a lifestyle label. Chu spends three days with Kevin, visiting his church and driving past his childhood home and the Bachmann counseling clinic, and pieces together a portrait of deep loneliness: Kevin has never had intercourse, stopped doing community theater to avoid questions, withdrew from men’s Bible study, and attempted suicide in 1997. He clings to the hope of missionary work in China. Chu, humbled by Kevin’s constancy, concludes that celibacy is not passive inaction but an active, daily series of choices. That same night, he prays for Kevin and kisses his boyfriend.

Oral History: Ted Haggard

Chu’s breakfast interview with Ted Haggard, the disgraced former megachurch pastor and National Association of Evangelicals president, begins combatively, with Haggard calling Chu “insignificant” before pivoting into an expansive monologue. Haggard frames his downfall as the price of leadership, positioning himself between Judas and Peter. He argues that homosexuality is today’s “unpardonable sin” the way divorce once was, and insists his new church, St. James, welcomes all. His self-pity is striking: he laments being “punished for serving the Lord.” His rhetoric blends genuine insight about lovelessness in the church with a defensive need for a redemption narrative, culminating in his declaration that he is “being resurrected” in the same city where he was “crucified.”

Chapter IX: The Ministry Is the Closet — Ben Dubow (Hartford, Connecticut)

Ben Dubow, a Jew-turned-evangelical who founded a thriving Connecticut church, St. Paul’s Collegiate, lived a double life as a closeted gay pastor for seventeen years. After converting through Young Life and attending reparative therapy, he compartmentalized his sexuality while preaching conservatism on homosexuality—a “thou doth protest too much” dynamic he later recognized. When his sexual partner Max threatened to expose him, Ben was forced out of ministry, losing his job, community, and housing. He fled to J.R. Mahon of XXXchurch, who helped him separate his sexuality crisis from his relationship with Christ, then to pastor Bart Campolo, who helped him begin integrating faith and sexual identity. Now working as a sous-chef and attending an affirming Baptist church, Ben embraces a “spirituality of transparency” that is the antithesis of his hidden past.

Chapter X: Agreeing to Disagree — The Evangelical Covenant Church (Chicago)

This chapter shifts from personal narrative to institutional dynamics, examining the Evangelical Covenant Church as a microcosm of evangelicalism’s broader struggle. Andrew Freeman came out in 2010 on the same day he was consecrated as a missionary, launching the blog Coming Out Covenant, which galvanized debate across the 850-congregation denomination. Charlotte Johnson, a lifelong member, had lived under an unspoken “don’t ask, don’t tell” policy with her partner Joan since 1967, until a hostile pastor outed Charlotte at her workplace. Benj Sullivan-Knoff, the teenage son of an ordained Covenant minister, came out as bisexual during high school; his mother eventually told him she would walk away from her ordination before making him carry that burden again. The Covenant’s tradition of “agreeing to disagree” has held on other issues, but homosexuality poses a harder test. Superintendent Howard Burgoyne rejects single-issue litmus tests as “based in fear,” and asks: “What kind of a church do we need to be where it’s actually possible and desirable to come into a community to process these issues together?”

Oral History: Benjamin L. Reynolds

Benjamin L. Reynolds, a Baptist minister in the African-American church, knew from childhood he was called to preach but also that he was different. His father whipped him at age five for wearing his aunt’s pearls. He married a woman largely because he wanted to be a father, but the marriage ended in divorce. After he allowed a lesbian friend’s bio to list “avowed lesbian” in the church bulletin, attendance plummeted. In 2006, his photo appeared in the Denver Post after he spoke at a press conference supporting same-sex legal protections; his deacons confronted him, and Reynolds resigned and came out simultaneously. One deacon told him, “Everybody knew you were gay, but I’m mad as hell you told us.” Reynolds is now transferring his credentials to the United Church of Christ, noting that “the white church is who affirmed me.”

PART III: RECONCILING

Chapter XI: What Price, Unity? — First United Lutheran Church (San Francisco)

Chu traces the long history of Christian schism, noting that virtually every denomination results from someone’s conviction that a new church was needed. He examines First United Lutheran Church—expelled from the ELCA in 1995 for ordaining an openly gay pastor, Jeff Johnson, in defiance of denominational policy. Rather than capitulate, First United celebrated with a “Feast of the Expulsion.” The congregation survived two decades outside the denomination, selling its building to a Buddhist temple and becoming a “church without walls.” When the ELCA finally voted in 2009 to permit gay ordination, St. Francis (the co-expelled congregation) rejoined, but First United resisted. Pastor Susan Strouse suggests that sometimes “divorce on good terms is the right thing to do,” and that the broader church should accept that ideological unity may be impossible—people should part with blessings rather than resentments.

Chapter XII: New Community — The Gay Christian Network (Raleigh, North Carolina)

Justin Lee, a devout Southern evangelical who once called himself “God Boy,” founded the Gay Christian Network in 2001 after years of agonizing over his sexuality. GCN’s distinctive mission is welcoming both “Side A” (gay-affirming) and “Side B” (celibacy-required) Christians, refusing to make theology a litmus test. The organization operates on roughly $200,000 per year from member donations, and Justin has never earned more than $28,000. Chu visits GCN’s headquarters and attends Justin’s “Transforming the Conversation” campus tour at Georgia State, where Justin skillfully moderates dialogue between Side A and Side B students. The chapter also profiles GCN members: “Johnny,” a divinity student leading a double life; Dave and Shane, who met on GCN and married; and Sandy Bochnia, a straight mother who found GCN after pulling her son Stephen out of the closet and now serves as “GCN Mom.” Justin’s core conviction: if the church doesn’t learn to love gay people, “it’s not going to stop gay people from being accepted in society. What it’s really going to do is turn people off Christianity.”

Chapter XIII: Keeping It Together — The Schert Family (Valdosta, Georgia)

This chapter traces the ripple effects of Jan Schert’s midlife coming-out as a lesbian on her family. Jan, a deeply spiritual woman from the Jesus Movement era, fell in love with her friend Judy at forty-three, upending her twenty-three-year marriage to Dan. Dan, rather than vilifying Jan, chose to keep the family together, insisting their four children still have both parents. His mother and brother initially condemned Jan with threats of hell, though years later his brother apologized. Jan’s “dark night of the soul” led her to San Francisco, where a rewritten hymn at a gay-affirming church unlocked years of suppressed grief. She changed her name to Lee, returned to Georgia, and found spiritual nourishment at a progressive Episcopal congregation, while Dan, now remarried, still clings to Romans 8:38–39’s promise that nothing can separate them from God’s love.

Chapter XIV: Return of the Exiles — Lianna Carrera and Jennifer Knapp

Chu profiles two lesbian women who left the church over their sexuality and are now finding their way back. Lianna Carrera, a cradle Baptist and comedienne, was pulled off her Christian school basketball team after a deacon’s “vision” that she’d be a lesbian; her pastor father eventually became an ally, marching in pride parades. Lianna identifies as both Southern Baptist and agnostic, yet finds herself unexpectedly moved by old hymns. Jennifer Knapp, a former CCM star who sold over a million albums, came out at thirty-three and was largely rejected by the Christian music industry. She now tours with her Inside Out Faith project, combining music and comedy with Q&A about faith and sexuality. The chapter also includes testimony from Bishop Mary Glasspool, the first openly lesbian bishop elected in a major American denomination, who insists that the real issue underlying opposition to homosexuality is power and gender, not sex.

Chapter XV: A House of Prayer for All People — The Metropolitan Community Church

Chu visits MCC congregations in San Francisco and Las Vegas, exploring the world’s only predominantly gay denomination, founded by Troy Perry in 1968. MCC-SF is radically inclusive: its stained-glass window features symbols of multiple faiths, and its membership includes a Druid, a Jew, and atheists. MCC-LV, housed in a strip mall behind a Taco Bell, serves a bilingual population with Catholic influences. Chu appreciates MCC’s history of caring for AIDS victims and welcoming the outcast, but he is troubled by what he sees as an overemphasis on sexuality rather than God. After being hit on during the passing of the peace, he questions whether MCC has become more of a community center than a church. He concludes that while MCC provides crucial refuge, its identity crisis (too narrowly gay for some, too theologically diffuse for others) may threaten its relevance as younger LGBTQ Christians simply attend mainstream churches.

Chapter XVI: Feels Like Home — Highlands Church (Denver)

Chu arrives at Highlands Church, co-pastored by Mark Tidd—a defrocked Christian Reformed Church pastor—and Jenny Morgan, a partnered lesbian. Highlands distinguishes itself by being deeply Christ-centered and biblically engaged while fully welcoming LGBTQ people, rejecting the “lowest-common-denominator theology” accusation often aimed at affirming churches. Chu profiles congregants—Kirk and Eugene (a married couple with kids), Joe Song, Stacy Price, Joey Torres—showing how the church draws denominational immigrants seeking honest theological tension rather than comfortable answers. What makes Highlands exceptional is not its stance on homosexuality but its stance on humanity: encouraging people to bring their whole selves, question boldly, and live in creative tension.

Gideon Eads — The Counseling Session

This section presents raw email correspondence with Gideon Eads, a young gay man from a strict Southern Baptist family in Kingman, Arizona. Gideon recounts a devastating meeting with a church-appointed counselor who reframed his words, imposed a “covenant” banning all contact with gay people and pro-gay theology, and declared that Satan had his claws in him. The counselor dismissed Gideon’s scriptural knowledge and refused to engage with his contextual arguments about biblical passages on homosexuality. In subsequent emails, Gideon describes coming out to supportive church friends, his counselor’s unwillingness to reconsider, and his decision to stop meeting with him. The correspondence reveals how institutional religious power can be wielded to isolate and shame gay Christians.

Chapter XVII: “I Think God Understands” — Gideon Eads in Kingman, Arizona

Chu travels to Kingman to meet Gideon in person. A homeschooled, self-taught cake decorator from a strict Baptist family, Gideon lives in a remote desert town where he dreams of opening a cake shop. He recounts his mother’s disgust toward gay relatives—Febreezing the house after they visit—and contrasts his family’s rigid rule-based faith (banning Harry Potter, Disney) with his own emerging convictions. Gideon takes Chu hiking on desert hillsides where he talks aloud to God, finding clarity and beauty that shrink his problems. Chu draws a parallel to the biblical Gideon—a doubter who demanded signs but became a mighty warrior—and sees in Gideon an openness and fearlessness that inspire his own journey.

CONCLUSION

Conclusion

Chu revisits his former Bible teacher, Mr. Byers, who was forced to resign after being caught with a boyfriend, lost his family, and now identifies as a pantheist—no longer Christian but still humming hymns. This prompts Chu’s three-part diagnosis of the American church’s failures: cowardly pastors who avoid the homosexuality question; corrupted language where even “love” divides rather than connects; and people who behave badly in Christ’s name. He distinguishes between the church and God, arguing that Americans have “Hinduized” Christianity by picking personalized conceptions of God. His faith has shifted—from a private comfort to a shared, necessary love. He values skepticism, citing Nouwen on how community can carry doubters, and ends with a renewed commitment to a God of “unimaginable grace.”

Epilogue

Chu provides updates on several figures: Jake Buechner welcomed a child, Kevin Olson moved to China, Exodus International closed its doors with Alan Chambers apologizing for “undeniable trauma,” and Megan Phelps-Roper left Westboro Baptist Church after a debate about repentance transformed her understanding. Chu married his boyfriend Tristan; his parents did not attend, though his mother later cooked them a thirteen-dish banquet—a gesture of love she couldn’t speak aloud. Gideon provides a final update: after coming out to his parents, his mother collapsed from stress, he was treated as “infected,” and ultimately moved out. His family discovered his participation in the book and cut off contact. He found acceptance at a new church and through Rotaract, but still aches for his family: “I have never stopped loving them.”